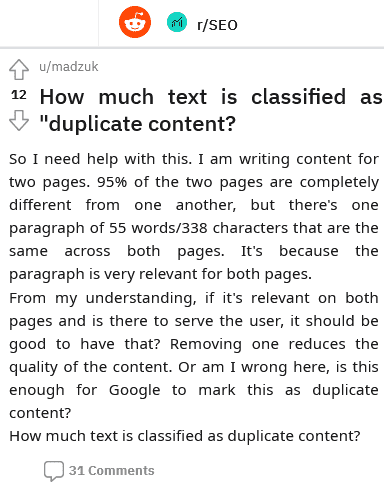

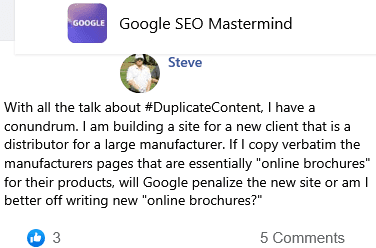

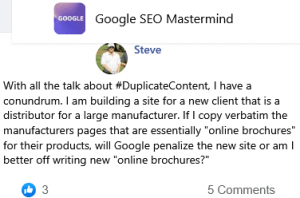

How much text is classified as "duplicate content"?

So I need help with this. I am writing content for two pages. 95% of the content on the two pages is completely different, but one paragraph of 55 words/338 characters is the same on both pages, because that paragraph is very relevant to both.

From my understanding, if it's relevant on both pages and is there to serve the user, it should be fine to have it on both? Removing it from one reduces the quality of the content. Or am I wrong here? Is this enough for Google to mark this as duplicate content?

How much text is classified as duplicate content?

31 💬🗨

📰👈

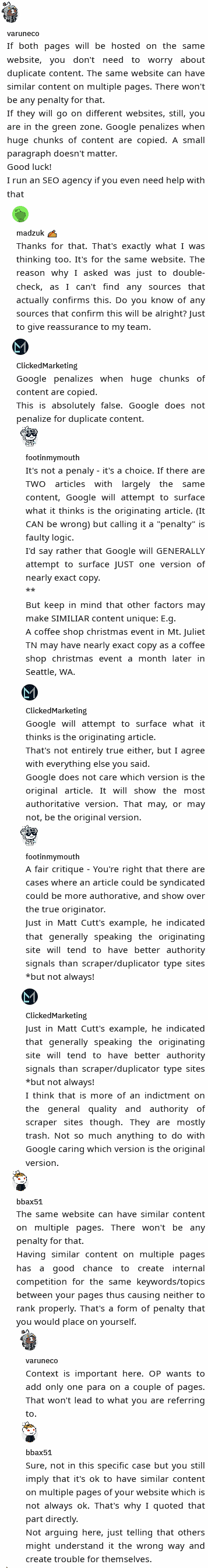

If both pages will be hosted on the same website, you don't need to worry about duplicate content. The same website can have similar content on multiple pages. There won't be any penalty for that.

If they will go on different websites, still, you are in the green zone. Google penalizes when huge chunks of content are copied. A small paragraph doesn't matter.

Good luck!

I run an SEO agency if you ever need help with that.

Thanks for that. That's exactly what I was thinking too. It's for the same website. The reason I asked was just to double-check, as I can't find any sources that actually confirm this. Do you know of any sources that confirm this will be alright? Just to give reassurance to my team.

ClickedMarketing

> Google penalizes when huge chunks of content are copied.

This is absolutely false. Google does not penalize for duplicate content.

It's not a penalty; it's a choice. If there are TWO articles with largely the same content, Google will attempt to surface what it thinks is the originating article. (It CAN be wrong.) But calling it a "penalty" is faulty logic.

I'd say rather that Google will GENERALLY attempt to surface JUST one version of nearly identical copy.

But keep in mind that other factors may make SIMILAR content unique. E.g.:

A coffee shop Christmas event in Mt. Juliet, TN may have nearly the exact same copy as a coffee shop Christmas event a month later in Seattle, WA.

ClickedMarketing

> Google will attempt to surface what it thinks is the originating article.

That's not entirely true either, but I agree with everything else you said.

Google does not care which version is the original article. It will show the most authoritative version. That may, or may not, be the original version.

footinmymouth

A fair critique. You're right that there are cases where a syndicated article could be more authoritative and show above the true originator.

Just in Matt Cutts's example, he indicated that, generally speaking, the originating site will tend to have better authority signals than scraper/duplicator type sites, *but not always*!

ClickedMarketing

> Just in Matt Cutts's example, he indicated that, generally speaking, the originating site will tend to have better authority signals than scraper/duplicator type sites, *but not always*!

I think that is more of an indictment of the general quality and authority of scraper sites though. They are mostly trash. It doesn't have much to do with Google caring which version is the original.

bbax

> The same website can have similar content on multiple pages. There won't be any penalty for that.

Having similar content on multiple pages has a good chance of creating internal competition between your pages for the same keywords/topics, causing neither to rank properly. That's a form of penalty that you would place on yourself.

Context is important here. OP wants to add only one para on a couple of pages. That won't lead to what you are referring to.

bbax

Sure, not in this specific case, but you still imply that it's ok to have similar content on multiple pages of your website, which is not always ok. That's why I quoted that part directly.

Not arguing here, just pointing out that others might take it the wrong way and create trouble for themselves.

📰👈

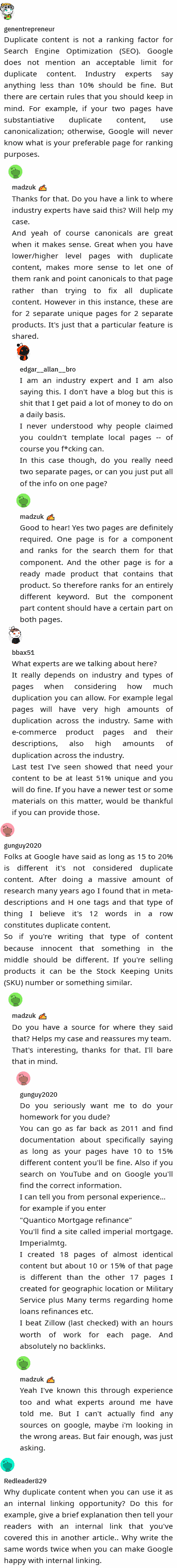

genentrepreneur

Duplicate content is not a ranking factor for Search Engine Optimization (SEO). Google does not mention an acceptable limit for duplicate content. Industry experts say anything less than 10% should be fine. But there are certain rules that you should keep in mind. For example, if your two pages have substantive duplicate content, use canonicalization; otherwise, Google will not know which page you prefer for ranking purposes.

Thanks for that. Do you have a link to where industry experts have said this? Will help my case.

And yeah, of course canonicals are great when they make sense. They're great when you have lower/higher level pages with duplicate content, where it makes more sense to let one of them rank and point canonicals to that page rather than trying to fix all the duplicate content. However, in this instance, these are 2 separate unique pages for 2 separate products. It's just that a particular feature is shared.

I am an industry expert and I am also saying this. I don't have a blog but this is shit that I get paid a lot of money to do on a daily basis.

I never understood why people claimed you couldn't template local pages — of course you f*cking can.

In this case though, do you really need two separate pages, or can you just put all of the info on one page?

madzuk ✍️

Good to hear! Yes, two pages are definitely required. One page is for a component and ranks for the search term for that component. The other page is for a ready-made product that contains that component, so it ranks for an entirely different keyword. But a certain part of the component content should appear on both pages.

bbax

What experts are we talking about here?

It really depends on industry and types of pages when considering how much duplication you can allow. For example legal pages will have very high amounts of duplication across the industry. Same with e-commerce product pages and their descriptions, also high amounts of duplication across the industry.

The last test I've seen showed that you need your content to be at least 51% unique and you will do fine. If you have a newer test or some materials on this matter, I'd be thankful if you could provide them.
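For anyone who wants to put a number on "how unique is this page" before arguing about thresholds, here is a rough Python sketch. It assumes paragraphs are separated by blank lines and only counts paragraphs that match verbatim (ignoring case and spacing); the function name `unique_word_share` is made up for illustration. The 51% figure is the claim from the test mentioned above, not a documented Google threshold.

```python
def unique_word_share(page: str, other: str) -> float:
    """Fraction of words in `page` that sit in paragraphs not found verbatim in `other`.

    Assumption: paragraphs are separated by blank lines; matching ignores
    case and runs of whitespace, nothing smarter than that.
    """
    def paragraphs(text: str) -> list[str]:
        # Normalize each paragraph: collapse whitespace and lowercase it.
        return [" ".join(p.split()).lower() for p in text.split("\n\n") if p.strip()]

    other_paragraphs = set(paragraphs(other))
    total_words = 0
    unique_words = 0
    for paragraph in paragraphs(page):
        word_count = len(paragraph.split())
        total_words += word_count
        if paragraph not in other_paragraphs:
            unique_words += word_count
    # An empty page has nothing duplicated, so treat it as fully unique.
    return unique_words / total_words if total_words else 1.0


page_a = "alpha beta gamma\n\nshared one two"
page_b = "delta epsilon\n\nShared  one two"
print(unique_word_share(page_a, page_b))
```

A page where one 55-word paragraph is shared and the rest is different would score very close to 1.0 on this measure.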

gunguy

Folks at Google have said as long as 15 to 20% is different, it's not considered duplicate content. After doing a massive amount of research many years ago, I found that in meta descriptions, H1 tags, and that type of thing, I believe 12 words in a row constitutes duplicate content.

So if you're writing that type of content, make sure that something in the middle is different. If you're selling products, it can be the Stock Keeping Unit (SKU) number or something similar.
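If you want to check the "N words in a row" idea against your own titles or descriptions, a minimal Python sketch could look like this. It finds the longest run of consecutive words two texts share. The function name `longest_shared_run` is hypothetical, and the 12-word cutoff is only the figure quoted above, not something Google has been shown to confirm.

```python
def longest_shared_run(a: str, b: str) -> int:
    """Length, in words, of the longest run of consecutive words shared by a and b.

    Words are compared case-insensitively and split on whitespace only, so
    punctuation attached to a word counts as part of that word.
    """
    words_a = a.lower().split()
    words_b = b.lower().split()
    best = 0
    # previous[j] = length of the shared run ending at words_a[i-2], words_b[j-1]
    previous = [0] * (len(words_b) + 1)
    for i in range(1, len(words_a) + 1):
        current = [0] * (len(words_b) + 1)
        for j in range(1, len(words_b) + 1):
            if words_a[i - 1] == words_b[j - 1]:
                current[j] = previous[j - 1] + 1
                best = max(best, current[j])
        previous = current
    return best


title_a = "buy the best coffee beans in town today"
title_b = "we sell the best coffee beans online"
print(longest_shared_run(title_a, title_b))  # 4 ("the best coffee beans")
```

Dropping a SKU or a location into the middle of a templated sentence, as suggested above, would break a long run into two shorter ones.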

Do you have a source for where they said that? Helps my case and reassures my team.

That's interesting, thanks for that. I'll bear that in mind.

Do you seriously want me to do your homework for you dude?

You can go as far back as <year> and find documentation specifically saying that as long as your pages have 10 to 15% different content, you'll be fine. Also, if you search on YouTube and on Google, you'll find the correct information.

I can tell you from personal experience…

For example, if you enter

"Quantico Mortgage refinance"

You'll find a site called imperial mortgage. Imperialmtg.

I created 18 pages of almost identical content, but about 10 or 15% of each page is different from the other 17 pages I created, varying by geographic location or military service plus many terms regarding home loans, refinances, etc.

I beat Zillow (last I checked) with an hour's worth of work for each page, and absolutely no backlinks.

madzuk ✍️

Yeah, I've known this through experience too, and from what experts around me have told me. But I can't actually find any sources on Google; maybe I'm looking in the wrong areas. But fair enough, I was just asking.

Redleader

Why duplicate content when you can use it as an internal linking opportunity? For example, give a brief explanation, then tell your readers with an internal link that you've covered this in another article. Why write the same words twice when you can make Google happy with internal linking?
