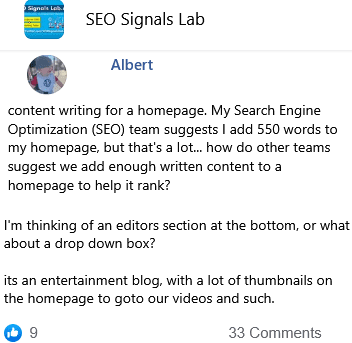

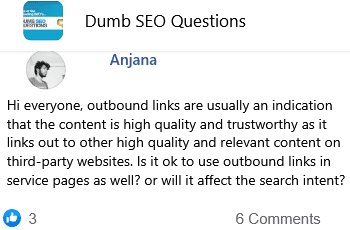

INTERNAL LINKS? I noticed something interesting in a quick Search Engine Result Page (SERP) analysis today. Sites outranking my client have much less domain authority. Our site has the highest scores across the board for link quality metrics from all the link tools. The sites outranking us have fewer, and worse, external links, but almost 1 million internal links going to the sub-page that's outranking my client's homepage. So, what kind of on-site bullshit are they doing to blast out so many internal links? Have you seen this before? Is "internal link spam" some kind of new tactic?



Wikipedia

Wouldn't that have higher domain authority?

Chris » Daniel

I meant interlink your site the way Wikipedia does.

Nathan

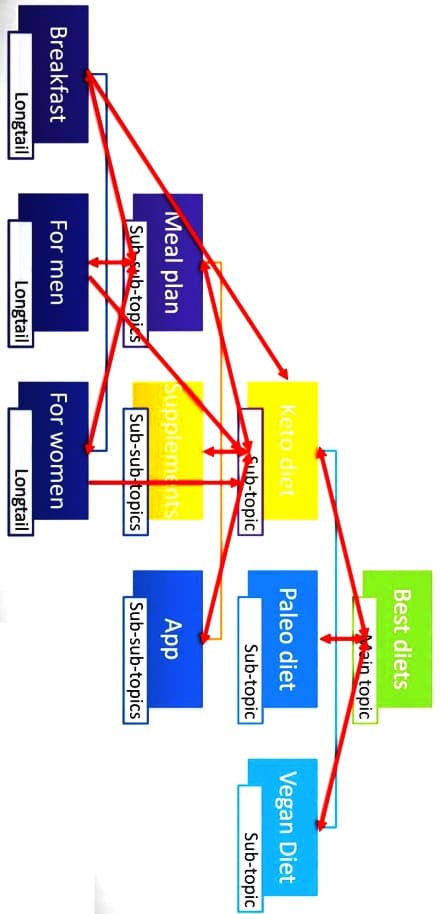

What's interesting about Wikipedia is that they don't worry about siloing based on topics. They just link internally throughout the site.

Ted

Internal linking is super super important. I've seen cases where a large site will be passing a lot of link juice to a totally meaningless page. Your competitor is intentionally or inadvertently telling Google that subpage is really important.

Bagani

So this is assuming that search engines only care about links? Yeah...

Subpages outranking the homepage leads me to believe that the subpages/service pages do have better content.

Let's assume not...

Magic pages, if they have a ton of internal links.

Natalie

I'm assuming then that their sites have way more content too?

That's a super reasonable assumption, but in this case, the client's content is the best I've ever seen. All of the competitors in this space (personal injury lawyers) have extremely comprehensive sites with extensive content on every aspect of the law they practice. It's table-stakes to get into these SERPs.

Philip

Because domain authority is a number made up by SEO tools, not by Google. Personally, I think links have gotten a LOT less valuable than, say, 5 years ago, and those metrics are driven almost entirely by links.

Yes!


Ammon Johns 🎓

1. No third-party link metric is truly reliable. They are benchmarks at best. When what you see in the Search Engine Result Page (SERP) disagrees with what you see in the tool, it is most likely the tool that is miscounting.

2. Domain Authority in particular is a bad metric for ANY purpose other than checking that your own or a client's internal linking is distributing it across all pages as desired.

3. Domain Authority, or any other tool's domain level metrics have NO, I repeat NO effect on ranking because Google don't even have a domain level link analysis except for their spam filtering ones. Google only calculate links at the page level. Domain Authority is a made up SEO metric purely for sorting out your internal link juice flow, siloing, etc.

4. Google had their latest big spam update only a couple of months back. These usually primarily target links and link networks. The third-party tools CANNOT know which links Google are ignoring, or even which are disavowed. Refer to point 1.

5. The most commonly blocked bots include all of the link analysis ones, Majestic, AHREFS, SEMrush, etc. Refer to point 1.

Yes!

Matt » Ammon Johns

What about the second part "million internal links" ranking weight?

Ammon Johns 🎓 » Matt

That is basically just link siloing, part of the PageRank sculpting techniques that have been common practice since the Noughties, but doing it right requires that you actually have a million pages. More than one link to the same URL, per page, doesn't carry extra link weighting (PageRank), according to multiple Googlers over the years, although Google may (or may not) use the highest-value one of the links on any page.
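To make the point about repeated links concrete, here is a minimal sketch of the textbook PageRank iteration run over a tiny internal link graph. It is an illustration only, not how Google ranks pages today: the function name `pagerank`, the damping value of 0.85, and the example site are all assumptions made for this sketch. Repeated links from one page to the same URL are collapsed into a single edge, matching the behaviour described above.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Textbook PageRank over an internal link graph (illustrative only).

    links maps each page to the list of pages it links to; the list may
    contain the same target more than once.
    """
    # Assumption for this sketch: several links from one page to the same
    # URL count as a single edge, so duplicates are collapsed with set().
    graph = {page: set(targets) for page, targets in links.items()}
    pages = set(graph) | {t for targets in graph.values() for t in targets}
    for page in pages:
        graph.setdefault(page, set())

    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Rank held by pages with no outgoing links is spread evenly.
        dangling = sum(rank[p] for p in pages if not graph[p])
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for page, targets in graph.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        rank = new_rank
    return rank


# Hypothetical five-page site where most pages link to one service page.
site = {
    "home": ["service", "about"],
    "about": ["home", "service", "service", "service"],
    "blog-1": ["service", "home"],
    "blog-2": ["service"],
    "service": ["home"],
}
ranks = pagerank(site)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page:8s} {score:.3f}")
```

In this toy model, the three links from "about" to "service" contribute exactly as much as one would, while each additional page that links to "service" does add to its score. That is why sculpting at scale needs many distinct pages rather than many links per page.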

Darren ✍️

Thanks for all the replies, everyone. So if we're throwing out the link metrics from all the third-party link tools as completely useless, then how do we approach competitive analysis? Any links to good posts on how to approach this? I feel like we're driving blind without the ability to compare link profiles and their impact on rank.

Page level metrics are not useless, simply not entirely reliable. I'd still recommend Majestic anytime as a handy tool to at least know what sort of level, quantity, and quality of links it found.

Tapio » Darren

From what I have read of your assumptions, I think one major issue is that your conclusions are too black-and-white.

Everything depends on context and is murky because, for example, we don't know the exact ranking factors.

Accept uncertainty and aim for the best possible outcome, not direct answers and on/off switches.

Darren ✍️ » Carolyn

Sure!

Prakash

My 2 cents: every 4-5 internal links count as 1 guest post! I have done something similar, and my rankings have skyrocketed in a few months.


Roland

Great content and good internal linking will beat out links and semi good content all day long.

That depends entirely on the links.

Roland » Ammon Johns

Not really, in my experience. If you can make great content, it doesn't matter if they have 10 or 20x more links.

Of course, if you have a completely new site, or your competitors also have great content, it's another topic.

Ammon Johns 🎓 » Roland

I've had entire sites, brand new, take a top spot on a competitive query (and I mean MY definition of competitive) based on the power of one really high quality link.

There is a good reason that Google have put in hundreds of spam updates that tackle link manipulation – it works.

Roland » Ammon Johns

I didn't say links are useless; we use them ourselves.

I said great content with no links beats links with mediocre content. You disagree?

Ammon Johns 🎓 » Roland

Yes.

For many, many years, the #1 result for "click here" was one of relatively very few pages that contained neither of those words – the Adobe download page. So it had zero content relevant to the search made, just purely there on links.

Less well known is that Amazon, for several years, had their root domain listed, and the #1 result for their brand, but had no content at all. Instead that URL did a 302 redirect to a page with more parameters for session purposes.

Now, in the years since, Google search has been tweaked a lot to prevent Googlebombing (pointing specific anchor text links to a page to make it rank, as per "miserable failure"), reduce anchor text spam, and of course, to better process queries themselves so that 'click here' would be understood as a very different search.

But all of those are tweaks and refinements. The core functionality and power of links remains, and remains able to trump content, while the reverse is NEVER true. A URL with no links at all won't even be included, and is classified as an Orphan Page, no matter how stellar the content.
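For anyone wanting to check their own site for orphan pages, a minimal sketch follows. It assumes you already have a list of URLs (for example from a sitemap) and a map of internal links from a crawl; the function name `find_orphans`, the `entry_points` parameter, and the sample data are hypothetical and only there for illustration.

```python
def find_orphans(pages, links, entry_points=("/",)):
    """Return pages that no other page links to.

    pages: iterable of all known URLs on the site (e.g. from a sitemap).
    links: dict mapping a source URL to the URLs it links to.
    entry_points: URLs treated as reachable without an internal link.
    """
    # A page linking to itself does not count as an inbound link.
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source
    }
    return sorted(p for p in pages if p not in linked and p not in entry_points)


# Hypothetical crawl data.
pages = ["/", "/services", "/blog/post-1", "/old-landing-page"]
links = {
    "/": ["/services", "/blog/post-1"],
    "/services": ["/"],
    "/old-landing-page": ["/old-landing-page"],
}
print(find_orphans(pages, links))
```

This only covers internal links. A page with no internal links can still be found through external links, which this sketch does not track.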

Videos with little or no textual content can rank, as can images, as can many other pages that have genuine value, a ton of links, but almost no content.

Instagram posts and Pinterest boards frequently do okay in search. Not stellar compared to something that has BOTH great links and great content, but certainly better than relatively unknown content with no decent links.

If you absolutely had to choose one or the other, and couldn't have a mix of both, you should choose links every single time. It's not even close. The ONLY difference is that relatively crappy content will still tend to count for something, while relatively crappy links may count for nothing. But that's a spam control factor, introduced because of how easy it was to rank ANYTHING with links in the past.

Roland » Ammon Johns

I would agree to some extent.

But those pages rank not only because of links, but also because of all the other signals. On top of that, those are huge sites with tons of traffic from all sources. It's not really fair to compare them to anything.

I would also say good links with mediocre content will most likely be a lot more expensive than going with good content and a few links. However, I would argue great content with a few links will do better, especially over time.

If you had a news page, that might be irrelevant 😉

For me, the answer is somewhere in the middle, depending on the scenario.

Ammon Johns 🎓 » Roland

Bought links (where they are bought for cash, rather than by more creative methods of 'payment') almost always fall on the 'crappy links' end of things, which is why good content so often correlates with links that have value.

There are exceptions, of course, and where a client cannot for some reason create copy around a specific phrase (sometimes for legal reasons, such as being forced to refer to life insurance, the term people use, only as life assurance, the term allowed by the financial services watchdogs), it is often up to an SEO to find them.

Ghazy

Silo structure

What is Silo structure?

Ghazy » Ahmed

It's a kind of internal linking strategy.

Ahmed » Mahmoud

Thanks!


Shumail

Google is currently biased towards internal links. You can use as many links as you like without getting any serious penalty, which is why I spend lots of time on internal links and plugins like Link Whisper. Internal links are a game changer if you know how to use them smartly.

John

For local, this works really well.

Yes it would. Local Search is the one kind of search where PageRank is not used. They still use links for Citation, but they are NOT weighted for PageRank.

John » Ammon Johns

Thank you so much for explaining. I was wondering why it has been working so well for local for years already, and not always for other stuff.

Zafar

First, Moz Domain Authority (DA), Page Authority (PA), Ahrefs Domain Rating (DR), or any other tool's scores are not the end point; you can easily manipulate them.

You should analyze their backlinks and consider other factors that make a good backlink, like relevancy, traffic, etc.

As for internal linking, it looks impossible to have 1 million internal links to one specific page. In this case, you should not rely on the tools and should look at other factors instead.

Alekseev

Google looks at the context of the incoming links on a page. A large number of links lacking context that adds unique value will not transfer anchor weight effectively.

Maric

Creating a meticulous internal link network is the most powerful thing you can do.

I do it in content production already. So they are ready as soon as a page is indexed.

Have you published a resource about internal linking?

Maric » Daniel

Not yet. But working on it.

Bergen

Take a look at the crawling capabilities of various backlink checking tools. The size of the backlink index they can produce reflects the tool's ability to discover new links, but also historical links. When you see where other tools are at vs. Moz, you'll understand why DA might not be the strongest indicator to use.

I would encourage you to investigate the competition with other backlink tools. Then also revisit the target competitor site every month and re-run your link checks to see if new links have come up in the index. Sometimes the tools don't show fresh links right away.

Yeo

How many internal links per post do you guys recommend?

Trevor

Not enough info. If it's a local site, then proximity is obviously a factor, but from the scope it seems like it's not a local site, so I'd start by investigating link metrics other than internal ones. The only way internal links could potentially be boosting that much is through external linking first, plus internal link mechanics, and there are lots of variables there.

Bogdan

I can't say I know this, but I have a strong feeling: internal links from non-indexed pages don't count for Google. So, if we see a website that is able to get a million pages indexed, we might be looking at a very good website, regardless of what Moz, Ahrefs, or SEMrush say about it.
